Optimization Techniques for the Intel MIC Architecture. Part 2 of 3: Strip-Mining for Vectorization

This is part 2 of a 3-part educational series of publications introducing select topics on optimization of applications for Intel’s multi-core and manycore architectures (Intel Xeon processors and Intel Xeon Phi coprocessors).

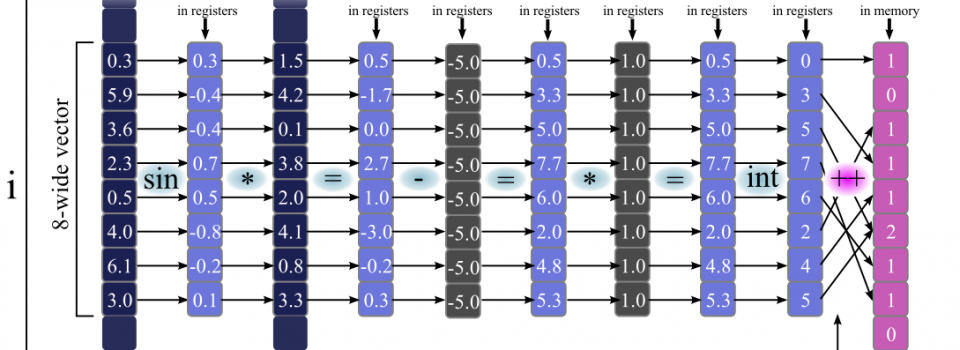

In this paper we discuss data parallelism. Our focus is automatic vectorization and exposing vectorization opportunities to the compiler.

For a practical illustration, we construct and optimize a micro-kernel for binning particles. Similar workloads occur in applications such as Monte Carlo simulations, particle physics software, and statistical analysis.

The optimization technique discussed in this paper, strip-mining, enables the compiler to vectorize the code, which results in an order-of-magnitude performance improvement on an Intel Xeon processor. On an Intel Xeon Phi coprocessor, performance is 1.4x higher than on a high-end Intel Xeon processor in single precision and 1.6x higher in double precision.
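
To give a sense of what strip-mining looks like in a binning workload, here is a minimal sketch in C. It is not the code from the paper: the function name `bin_strip_mined`, the strip width `STRIP`, and the simplified one-dimensional binning are all assumptions made for illustration. The idea is to split the loop over particles into strips, compute the bin indices for a whole strip in a dependence-free inner loop that the compiler can vectorize automatically, and then update the counters in a separate scalar loop.

```c
/* Hypothetical strip width; a real code would tune it to the vector length. */
#define STRIP 16

/* Illustrative 1-D binning: counts how many values of x[] fall into each of
 * n_bins equal-width bins covering [x_min, x_max).
 * Assumption: every x[i] lies inside [x_min, x_max), so no index clamping
 * is performed. counts[] must be zero-initialized by the caller. */
void bin_strip_mined(const float *x, int n, float x_min, float x_max,
                     int n_bins, int *counts)
{
    const float inv_width = (float)n_bins / (x_max - x_min);

    /* Outer loop walks over strips of at most STRIP particles. */
    for (int ii = 0; ii < n; ii += STRIP) {
        int idx[STRIP];
        const int len = (n - ii < STRIP) ? (n - ii) : STRIP;

        /* Iterations are independent of each other, so this loop is a
         * candidate for automatic vectorization. */
        for (int k = 0; k < len; k++) {
            idx[k] = (int)((x[ii + k] - x_min) * inv_width);
        }

        /* Two particles may land in the same bin, so the counter update
         * is kept in its own scalar loop. */
        for (int k = 0; k < len; k++) {
            counts[idx[k]]++;
        }
    }
}
```

Calling `bin_strip_mined(x, n, 0.0f, 4.0f, 4, counts)` on a zeroed `counts` array fills it with a four-bin histogram of `x`. Separating the index computation from the counter update is the point of the sketch: the arithmetic is isolated in a loop with no cross-iteration dependence, while the update that may hit the same bin twice stays scalar.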

See also:

- Part 1: Multi-Threading and Parallel Reduction

- Part 2: Strip-Mining for Vectorization

- Part 3: False Sharing and Padding

Complete paper: Colfax_Optimization_Techniques_2_of_3.pdf (650 KB)

Source code for Linux: Colfax_Tutorial_Binning.zip (6 KB)