Large Fast Fourier Transforms with FFTW 3.3 on Terabyte-RAM NUMA Servers

This paper presents the results of a Fast Fourier Transform (FFT) benchmark of the FFTW 3.3 library on Colfax’s 4-CPU, large-memory servers. Unlike other published benchmarks of this library, we study two distinct cases of FFT usage: sequential and concurrent computation of multithreaded transforms. In addition, this paper provides results for very large (up to N = 2^31) and massively parallel (up to 80 threads) shared-memory transforms, which have not been reported elsewhere.
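To give a sense of scale for the largest transform size mentioned above, the following minimal sketch estimates the size of the data arrays alone. It assumes double-precision complex samples (16 bytes each); the function name `fft_buffer_gib` is hypothetical and not taken from the paper. The estimate is a lower bound only: it does not include any scratch space the library may allocate, and it is not a substitute for the memory usage measured in the paper.

```python
# Assumption: each sample is a double-precision complex number
# (two 8-byte floats), i.e. 16 bytes per element.
BYTES_PER_COMPLEX_DOUBLE = 16


def fft_buffer_gib(log2_n: int, out_of_place: bool = False) -> float:
    """Return the size in GiB of the data arrays for a 1D transform of N = 2**log2_n.

    An in-place transform needs one array; an out-of-place transform
    needs separate input and output arrays.
    """
    n = 1 << log2_n
    buffers = 2 if out_of_place else 1
    return n * BYTES_PER_COMPLEX_DOUBLE * buffers / 2**30


if __name__ == "__main__":
    for log2_n in (20, 26, 31):
        in_place = fft_buffer_gib(log2_n)
        two_arrays = fft_buffer_gib(log2_n, out_of_place=True)
        print(f"N = 2^{log2_n}: {in_place} GiB in place, {two_arrays} GiB out of place")
```

Under these assumptions, a single N = 2^31 transform occupies 32 GiB in place, so running several such transforms concurrently quickly requires hundreds of gigabytes of RAM.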

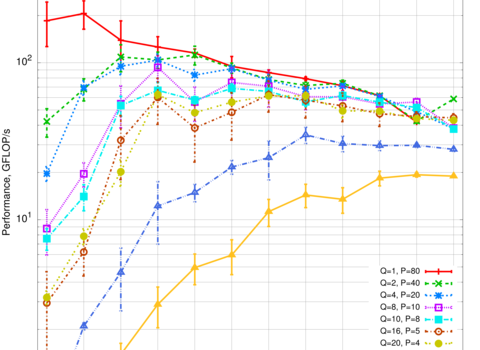

We discuss the FFT calculation, including parallelization techniques and hardware-specific implementations, and give the motivation from a specific area of astrophysical research. The results presented here include the dependence of performance on the transform size and on the number of threads, the memory usage of multithreaded 1D FFTs, and estimates of the FFT planning time. The paper shows how to optimize the performance of concurrent independent calculations on these large-memory systems by setting an efficient NUMA policy. This policy partitions the machine’s resources, reducing the average memory latency. Such optimization is not specific to FFT algorithms and can be useful for a variety of applications on large-memory NUMA systems. Our conclusion is that the FFTW implementation of multithreaded one-dimensional FFTs scales very well with the number of threads for large transforms, but less well for small transforms. Having a large amount of shared memory in the system benefits the performance of large concurrent FFTs, because it makes it possible to reduce instruction-level parallelism in favor of task-level parallelism.
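The idea of partitioning the machine with a NUMA policy can be sketched as follows. This is an illustration only, not the policy from the paper, whose exact settings are not given in this summary. It assumes a Linux system with the `numactl` utility, one NUMA node per CPU socket (four nodes on a 4-CPU server), and a hypothetical benchmark executable named `./fft_benchmark`. Each independent job is bound to the cores and the memory of one node, so that its threads access local memory.

```python
import shlex


def numactl_commands(command: list[str], num_nodes: int) -> list[list[str]]:
    """Build one numactl invocation per NUMA node.

    Each invocation restricts the job to the CPUs of a single node
    (--cpunodebind) and allocates its memory only on that node (--membind).
    """
    return [
        ["numactl", f"--cpunodebind={node}", f"--membind={node}", *command]
        for node in range(num_nodes)
    ]


if __name__ == "__main__":
    # Assumption: 4 NUMA nodes; "./fft_benchmark" is a placeholder name.
    for argv in numactl_commands(["./fft_benchmark"], num_nodes=4):
        print(shlex.join(argv))
```

The printed command lines could then be launched concurrently, for example from a shell script. Strict binding with `--membind` only works if each job's data fits in the memory attached to its node; larger jobs would need a different policy.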

Complete paper: Colfax_Benchmark_Large_1D_FFTW_NUMA.pdf (294 KB)
